Conductor

You describe the outcome. The system compiles the plan.

Today, you are the orchestrator. You draw the flowchart, wire the nodes, debug the timing. Every automation platform makes you think like a machine — sequential steps, one LLM call at a time, 24+ seconds for a 10-node flow.

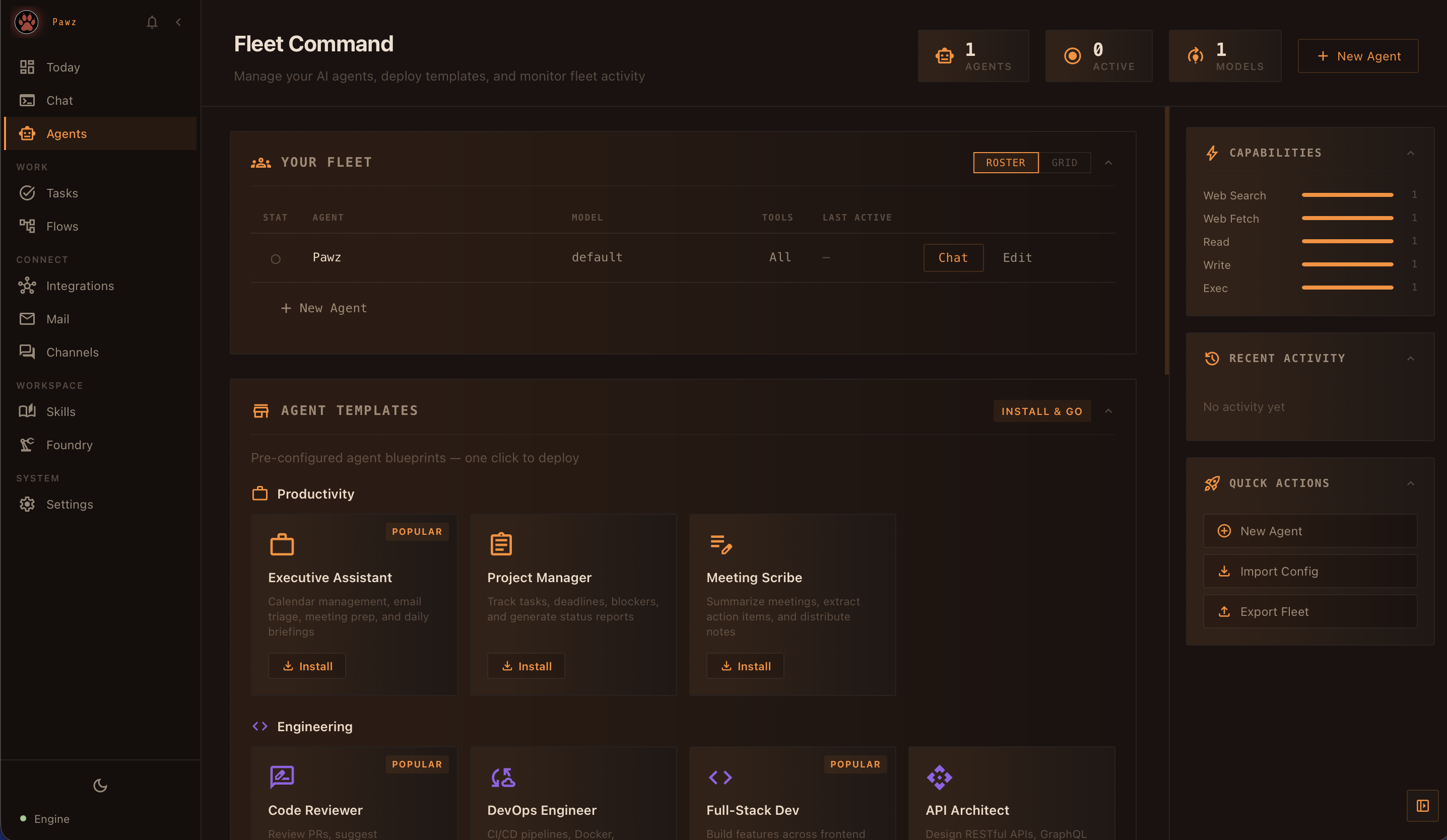

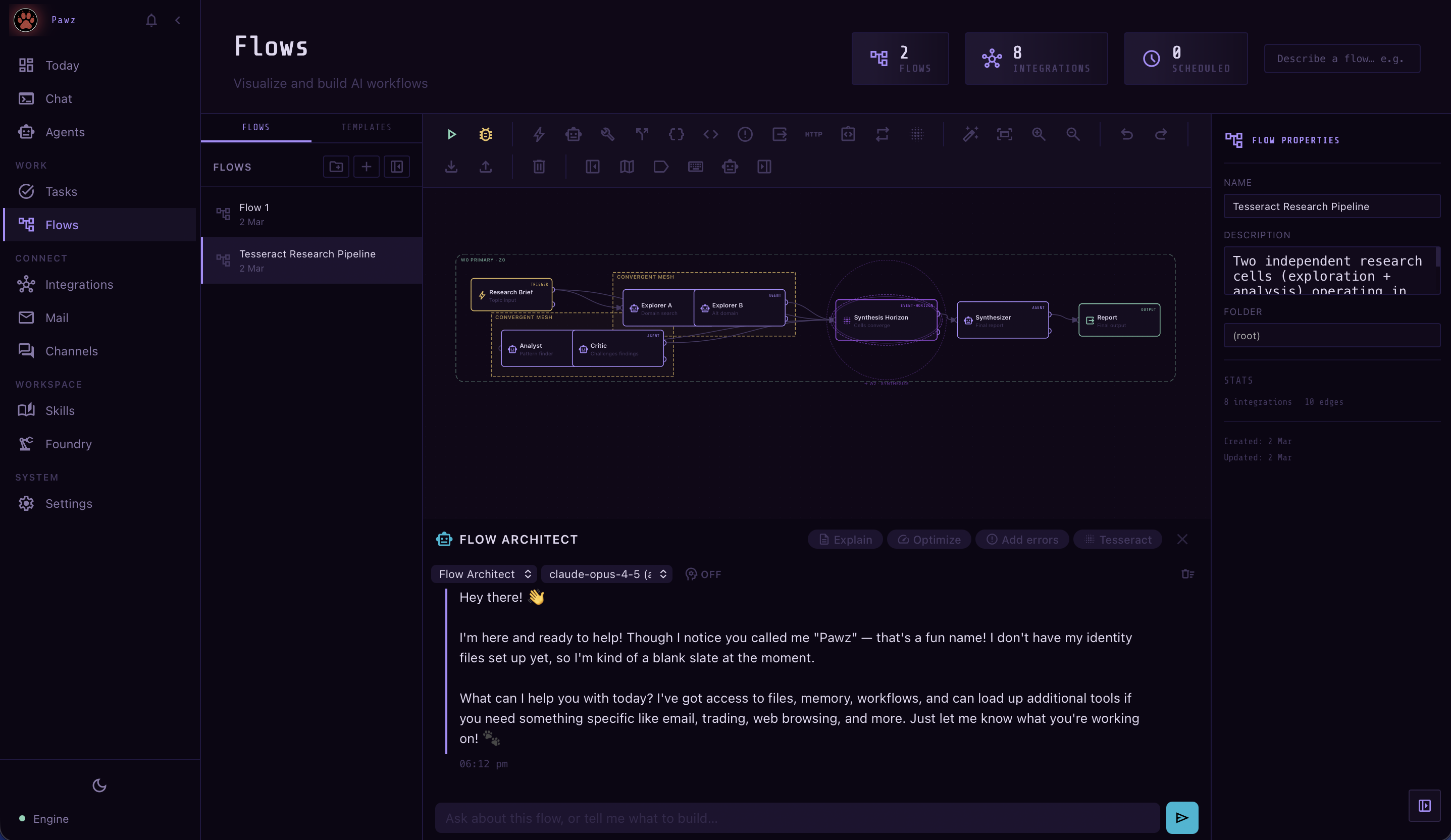

Conductor reads your intent and compiles it into an optimized execution strategy before anything runs. Five primitives (Collapse, Extract, Parallelize, Converge, and Tesseract) let the system, not you, decide how to run. The same 10-node flow finishes in 4 seconds with a third of the LLM calls.
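To make the "compile before running" idea concrete, here is a minimal sketch of what a Parallelize-style planning pass could look like. It is not Conductor's actual compiler: the function `compile_waves`, the step names, and the dependency-map input format are all hypothetical, chosen only for illustration. The sketch groups steps into waves, where every step in a wave depends only on earlier waves and could therefore run concurrently.

```python
from typing import Dict, List, Set


def compile_waves(deps: Dict[str, Set[str]]) -> List[List[str]]:
    """Group steps into waves (hypothetical helper, not a Conductor API).

    `deps` maps each step name to the set of steps it depends on.
    Every step in a wave has all of its dependencies in earlier waves,
    so the steps inside one wave are free to run at the same time.
    """
    remaining = {step: set(needs) for step, needs in deps.items()}
    done: Set[str] = set()
    waves: List[List[str]] = []
    while remaining:
        # A step is ready once everything it depends on has been scheduled.
        ready = sorted(s for s, needs in remaining.items() if needs <= done)
        if not ready:
            raise ValueError("unsatisfiable dependencies (cycle or unknown step)")
        waves.append(ready)
        done.update(ready)
        for step in ready:
            del remaining[step]
    return waves


# Illustrative five-step flow: three independent fetches feed one summary.
flow = {
    "fetch_orders": set(),
    "fetch_customers": set(),
    "fetch_inventory": set(),
    "summarize": {"fetch_orders", "fetch_customers", "fetch_inventory"},
    "notify": {"summarize"},
}
plan = compile_waves(flow)
print(plan)
```

Run one step at a time, this flow takes five turns. Grouped into waves it takes three, because the three fetches share a wave. The sketch covers only the scheduling idea; it does not model Collapse, Extract, or any reduction in LLM calls.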

Converge lets agents debate and self-correct in cycles, something no DAG-based platform can express, because a directed acyclic graph has no cycles by definition. Tesseract adds a fourth dimension: parallel workflow cells operating across depth and phase, converging at event horizons. You stop drawing flowcharts. You start stating outcomes.
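The cycle that Converge describes can be pictured as a critique-and-revise loop. The sketch below is an assumption about the general shape of such a loop, not Conductor's implementation: `converge`, `toy_critic`, and `toy_reviser` are hypothetical names, and the two "agents" are plain functions standing in for LLM calls. The loop ends when the critic has no remaining objection or when a round limit is reached.

```python
from typing import Callable, Optional, Tuple


def converge(
    draft: str,
    critique: Callable[[str], Optional[str]],
    revise: Callable[[str, str], str],
    max_rounds: int = 5,
) -> Tuple[str, bool]:
    """Alternate critique and revision (hypothetical helper, not a Conductor API).

    Returns the final draft and whether the critic accepted it
    within `max_rounds` critique passes.
    """
    for _ in range(max_rounds):
        objection = critique(draft)
        if objection is None:
            return draft, True  # critic is satisfied: converged
        draft = revise(draft, objection)
    return draft, False  # round limit hit before the critic accepted


# Toy stand-ins for two agents; a real system would call a model here.
def toy_critic(draft: str) -> Optional[str]:
    if "total" not in draft:
        return "missing total"
    if "USD" not in draft:
        return "missing currency"
    return None


def toy_reviser(draft: str, objection: str) -> str:
    if objection == "missing total":
        return draft + " total=42"
    return draft + " USD"


result, converged = converge("invoice:", toy_critic, toy_reviser)
print(result, converged)
```

The round limit is a design choice in this sketch: without it, two agents that never agree would loop forever.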